Another non-ENSO thing that affects seasonal forecasts

In a stable, unchanging climate, any trend would be tiny and random, and useless as a predictor of the future. However, we don’t live in a stable climate. Because of human emissions of greenhouse gases, as well as natural decade-to-decade variability, there are detectable trends in our climate.

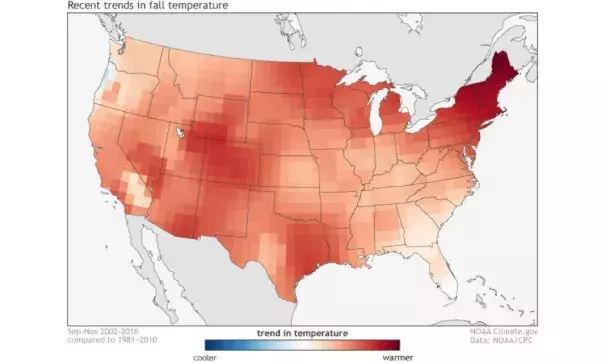

Forecasters use temperature trends across the United States as an important input when making seasonal forecasts. For instance, if a certain part of the country has a large warming trend during the summer, a forecaster might be more inclined to tilt the odds toward a warmer-than-average summer in that location. In fact, using these trends may give forecasters more skill for temperature forecasts than using the ENSO prediction (Peng et al. 2012). Trends carry less weight for precipitation, though, because the ENSO prediction is often (but not always) a better guide to a season’s precipitation patterns than long-term trends are.

At this point, dedicated readers of the blog may be thinking “this seems too simple, what’s the catch? You always like to have a catch.” Smart readers! The catch is that there are plenty of different ways of figuring out what the trend is. So which is best?

Average fall temperature for a point in the southwestern United States from 1981-2016. The climate trend used by the Climate Prediction Center is the average fall temperature over the most recent 15 years (2002-2016) minus the average fall temperature for 1981-2010. Forecasters use this information as a predictor for future seasonal temperatures. NOAA Climate.gov image using GHCN data.
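The calculation described in the caption is simple arithmetic: the mean of a recent 15-year window minus the mean of the 1981-2010 normals period. Here is a minimal sketch of it, assuming seasonal averages are already available as a dictionary keyed by year. The function name `cpc_style_trend` and the temperatures below are hypothetical, made up for illustration; this is not the Climate Prediction Center’s actual code or data.

```python
from statistics import mean


def cpc_style_trend(temps_by_year, recent_start=2002, recent_end=2016,
                    normal_start=1981, normal_end=2010):
    """Hypothetical helper: mean of the recent window minus mean of the normals.

    temps_by_year maps year -> seasonal (e.g. fall) average temperature.
    A missing year raises KeyError rather than being silently skipped.
    """
    recent = [temps_by_year[y] for y in range(recent_start, recent_end + 1)]
    normal = [temps_by_year[y] for y in range(normal_start, normal_end + 1)]
    return mean(recent) - mean(normal)


if __name__ == "__main__":
    # Made-up series: fall temperature rising steadily by 0.05 degrees per year.
    temps = {y: 60.0 + 0.05 * (y - 1981) for y in range(1981, 2017)}
    print(f"Trend estimate: {cpc_style_trend(temps):.3f} degrees")
```

For a perfectly steady warming like this made-up series, the result equals the warming rate times the gap between the two windows’ midpoints (2009 minus 1995.5, or 13.5 years), giving about 0.675 degrees. Real station data are noisy, so the two window averages will not line up this neatly.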
